FastN8N

Stop fixing AI-generated workflows. Start shipping them.

About This Project

I gave AI assistants the n8n knowledge they've been missing: a prebuilt database of 500+ nodes, their properties, and their documentation, so workflows deploy cleanly instead of breaking on first run.

Project Vision

An MCP server that gives AI assistants deep, structured knowledge of n8n, so they can design and validate workflows without the usual guess-and-check frustration.

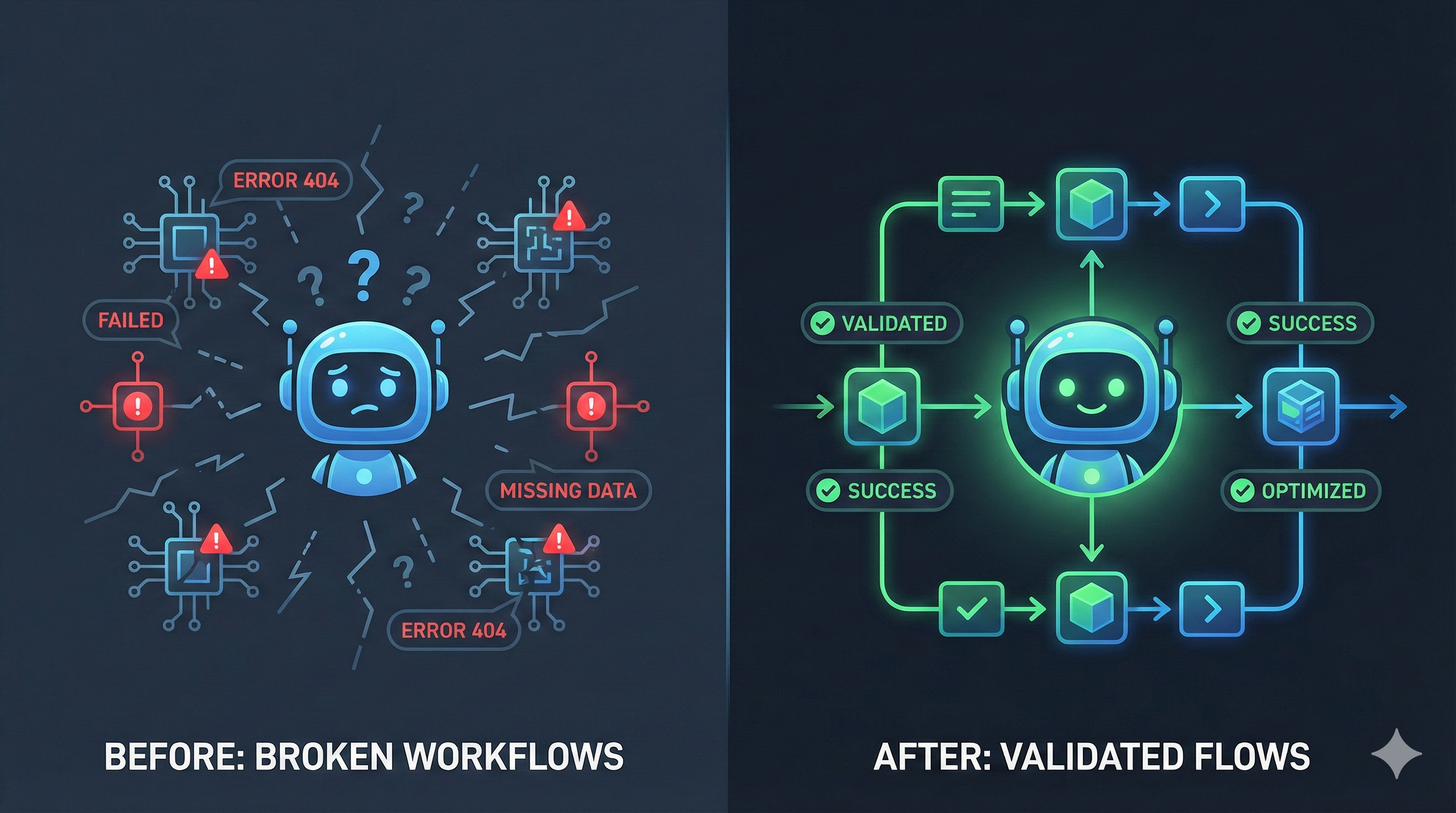

The Problem

AI assistants can draft n8n workflows quickly, but they routinely get node configurations wrong: they hallucinate properties, pick the wrong operations, and produce workflows that break on first run. The fix is to feed them accurate, structured knowledge of how n8n actually works.

What I Built

n8n-MCP ships with a prebuilt database of 500+ nodes (core plus LangChain), their properties, operations, and parsed documentation. AI assistants can query this knowledge to select the right nodes, configure them correctly, and validate everything before deployment.
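To make the "validate before deployment" idea concrete, here is a minimal sketch of checking a node configuration against a table of known nodes. It is an illustration only, not the project's actual schema or tool API: the `NodeSpec` and `NodeConfig` shapes, the `validateNodeConfig` function, and the `example.httpRequest` entry are all hypothetical.

```typescript
// Hypothetical shapes for illustration; the real database schema may differ.
interface NodeSpec {
  operations: string[]; // operations the node supports
  properties: string[]; // every property name the node accepts
  required: string[];   // properties that must be present
}

interface NodeConfig {
  type: string;
  operation: string;
  parameters: Record<string, unknown>;
}

// Tiny in-memory stand-in for the prebuilt node database.
const nodeDb: Record<string, NodeSpec> = {
  "example.httpRequest": {
    operations: ["get", "post"],
    properties: ["url", "headers", "body"],
    required: ["url"],
  },
};

// Returns a list of human-readable problems; an empty list means the config passed.
function validateNodeConfig(
  config: NodeConfig,
  db: Record<string, NodeSpec>
): string[] {
  if (!Object.prototype.hasOwnProperty.call(db, config.type)) {
    return ["unknown node type: " + config.type];
  }
  const spec = db[config.type];
  const errors: string[] = [];

  if (spec.operations.indexOf(config.operation) === -1) {
    errors.push("unknown operation: " + config.operation);
  }
  // Flag properties the node does not define (typical hallucinations).
  for (const key of Object.keys(config.parameters)) {
    if (spec.properties.indexOf(key) === -1) {
      errors.push("unknown property: " + key);
    }
  }
  // Flag required properties that were left out.
  for (const key of spec.required) {
    if (!Object.prototype.hasOwnProperty.call(config.parameters, key)) {
      errors.push("missing required property: " + key);
    }
  }
  return errors;
}

// A config with a made-up operation and a misspelled property name.
const problems = validateNodeConfig(
  {
    type: "example.httpRequest",
    operation: "fetch",
    parameters: { uri: "https://example.com" },
  },
  nodeDb
);
console.log(problems);
```

Run against the sample config, the check reports the invented operation, the misspelled `uri` property, and the missing `url`. An assistant could catch these kinds of mistakes from structured node data alone, with no live n8n instance involved.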

The key difference from other n8n MCP servers: this isn't just an API wrapper. Most alternatives only do CRUD operations against a live n8n instance. I built actual node intelligence that works offline, so assistants can answer configuration questions without needing a running n8n server.

How It Works

Point your MCP client (Claude, Cursor, Windsurf, Codex) at the server. It exposes tools for node discovery, property lookup, config validation, and optional workflow management when you connect credentials.

Deployment is lightweight: run it via npx or Docker, or self-host it as an HTTP server for team access. The Docker image strips out n8n dependencies entirely, keeping the footprint small.
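As a rough sketch of the npx route, the snippet below builds the kind of `mcpServers` entry that several MCP clients read from their JSON settings file. It rests on assumptions: the npm package name `n8n-mcp`, the `buildClientConfig` helper, and the environment variable names in the usage note are all placeholders, and the exact settings file and layout depend on your client.

```typescript
// One server entry in the "mcpServers" layout used by several MCP clients.
interface McpServerEntry {
  command: string;
  args: string[];
  env?: Record<string, string>;
}

interface McpClientConfig {
  mcpServers: Record<string, McpServerEntry>;
}

// Hypothetical helper: builds a config that launches the server through npx.
// packageName is an assumption; substitute the name the project publishes under.
function buildClientConfig(
  packageName: string,
  env: Record<string, string> = {}
): McpClientConfig {
  const entry: McpServerEntry = {
    command: "npx",
    args: ["-y", packageName], // -y skips npx's install confirmation prompt
  };
  // Only attach env when credentials or settings were supplied.
  if (Object.keys(env).length > 0) {
    entry.env = env;
  }
  const servers: Record<string, McpServerEntry> = {};
  servers[packageName] = entry;
  return { mcpServers: servers };
}

// Offline mode: node knowledge only, no n8n credentials.
const clientConfig = buildClientConfig("n8n-mcp");
console.log(JSON.stringify(clientConfig, null, 2));
```

To enable the optional workflow management tools, the same helper could be called with credentials, for example `buildClientConfig("n8n-mcp", { N8N_API_URL: "...", N8N_API_KEY: "..." })`. Those variable names are placeholders here; check the project's documentation for the ones it actually reads.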

Why It Matters

Teams building with AI-assisted automation spend too much time fixing broken node configs and reading docs. n8n-MCP gives the AI the knowledge it needs upfront, so workflows come out right the first time.