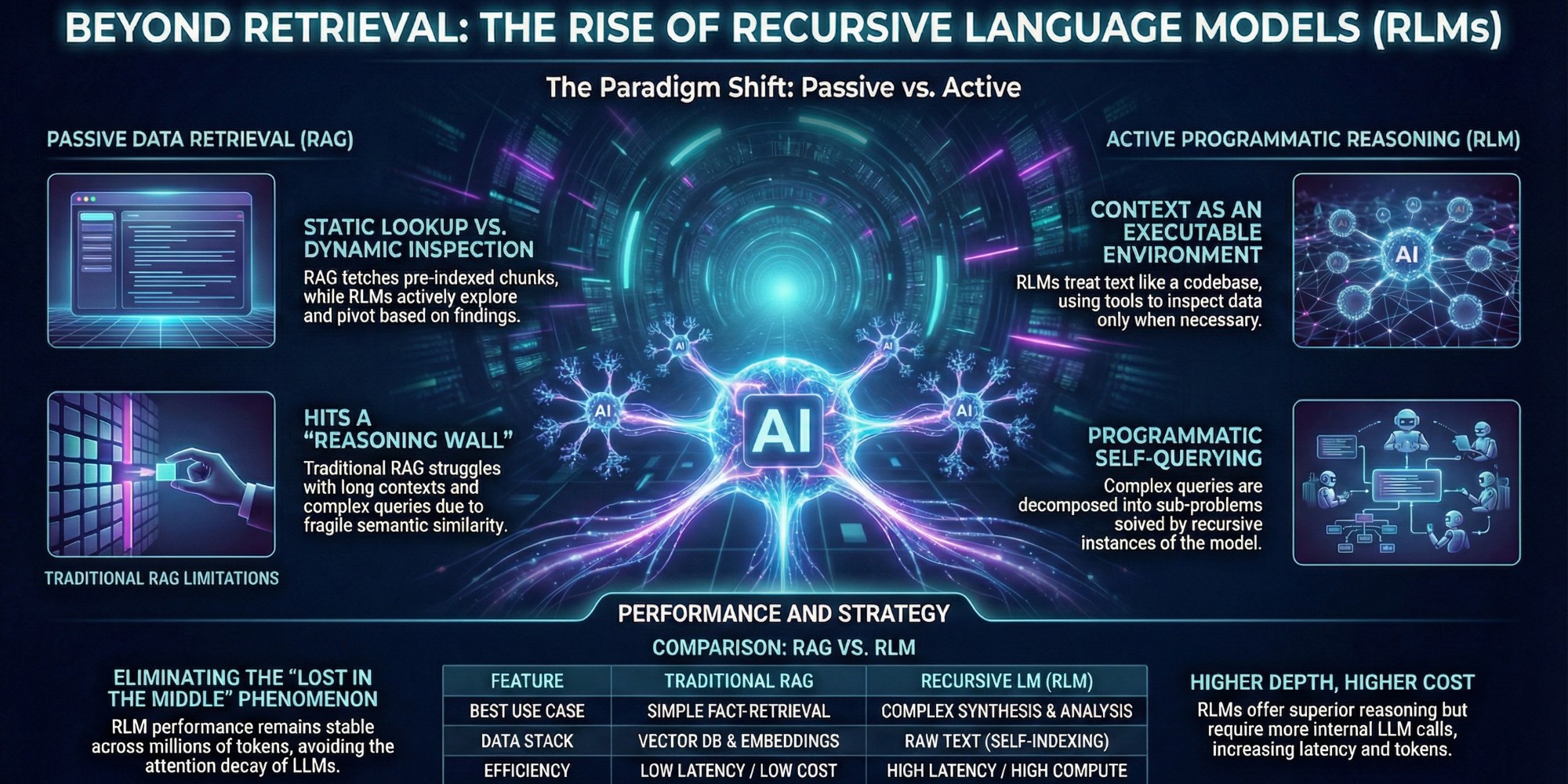

Recursive Language Models vs. RAG

A New Paradigm for Scaling LLM Context Without RAG

The Long-Context Crisis: Why RAG Hits a Wall

For years, we have relied on vector retrieval and dense embeddings as the gold standard for handling long context. The premise is seductive: convert your data into numbers, find the "nearest" numbers to a user's query, and feed that text to the LLM. But this approach rests on a fragile assumption—that semantic similarity equals relevance.

In practice, vector databases often fail at "reasoning-heavy" retrieval. If you ask a system to "find the flaw in the argument presented in Chapter 3 based on the evidence in Chapter 10," a standard RAG pipeline struggles. It retrieves chunks that look like the query, not chunks that answer the logic puzzle. We are hitting a hard ceiling where increasing `k` (the number of retrieved chunks) doesn't improve intelligence; it just adds noise.

Context rot and the attention bottleneck in LLMs

Even when we bypass retrieval and stuff the entire document into massive context windows (now reaching 1M+ tokens), we encounter the "Lost in the Middle" phenomenon.

LLMs are not perfect readers; their attention mechanisms degrade over long sequences, often prioritizing information at the very beginning or end of the prompt while hallucinating or ignoring the middle.

Decoding Recursive Language Models (RLMs)

Treating context as an executable environment

Recursive Language Models (RLMs) flip the traditional paradigm on its head. Instead of trying to shove the context into the model, RLMs treat the context as an external executable environment—similar to a file system or a database. The LLM doesn't "read" the text in a linear pass; it is given a set of tools to inspect it.

Imagine a developer debugging code. They don't memorize the entire codebase. They open files, search for function definitions, and trace logic paths. RLMs emulate this agentic workflow. They operate in a Read-Eval-Print Loop (REPL), generating code or commands to interact with the data only when necessary. This allows the model to handle gigabytes of text without ever needing a context window larger than a few thousand tokens.

The mechanics of programmatic self-querying

The "recursive" magic happens in how the model answers questions. When faced with a complex query, the RLM decomposes it into sub-problems. It might write a script to "read the first 500 words," analyze the output, and then decide to "search for the term 'revenue' in the next section."

If a sub-problem is still too complex, the model calls itself recursively—instantiating a new instance of the LLM to solve that specific chunk. This programmatic self-querying creates a tree of reasoning processes. The model isn't just predicting the next token; it is architecting a solution path, dynamically deciding what to read and what to ignore based on what it finds in real-time.

Mechanism Battle: RLM Dynamic Inspection vs. RAG

Active exploration vs. static document lookup

The fundamental difference between these architectures is passivity versus activity. Traditional RAG is passive: a retriever fetches documents based on a pre-calculated index, and the generator deals with whatever it gets. If the retriever misses the crucial fact because the keywords didn't match, the game is over.

RLMs are active. They perform dynamic inspection. If an RLM looks at a section and realizes the answer isn't there, it can pivot. It can decide, "I need to check the appendix," or "This section references a date, let me search for that date." This active exploration allows RLMs to solve multi-hop reasoning tasks that baffle standard RAG systems, mimicking human investigation rather than database lookups.

Eliminating the external vector database dependency

One of the most attractive operational benefits of RLMs is the simplification of the data stack. RAG requires a heavy infrastructure: chunking pipelines, embedding models, vector databases (like Pinecone or Weaviate), and constant re-indexing strategies to keep data fresh.

With Recursive Language Models, the raw text *is* the index. Because the model navigates the document programmatically, you don't need to pre-compute vectors. This eliminates the "synchronization gap" where your vector store drifts from your source truth. For applications involving rapidly changing data or private, secure documents where you don't want to manage external indexes, this architecture offers a cleaner, self-contained solution.

Critical Analysis: Performance, Cost, and Complexity

Unlocking infinite context reasoning capabilities

The theoretical ceiling for RLMs is massive. Because they process data in chunks—loading only what is immediately necessary into their active window—they essentially unlock infinite context reasoning. You could theoretically point an RLM at a codebase of 10 million lines or a legal discovery dump of 50,000 pages, and it would churn through it.

This capability makes them uniquely consistent. Unlike long-context attention, which degrades, an RLM's performance remains stable regardless of document length. It approaches a 100-page document with the same rigorous methodology as a 1-page memo, making it the superior choice for high-stakes tasks where "hallucination by omission" is not an option.

Managing latency and recursive compute costs

However, there is no free lunch. The "recursion" in RLM translates to compute intensity. A single user query might trigger dozens, or even hundreds, of internal LLM calls as the model decomposes the problem and explores the text. This results in significantly higher latency and token costs compared to a single-shot RAG retrieval. While RAG might return an answer in 2 seconds for $0.01, an RLM might take 30 seconds and cost $0.50 to arrive at a more accurate answer.

The Future Landscape: Adoption and Hybrid Architectures

Choosing between recursion and retrieval for your pipeline

So, which architecture belongs in your stack? The decision comes down to the "Search vs. Reason" spectrum. If your application relies on fact-retrieval—answering "What is our refund policy?" or "Who is the CEO?"—stick with RAG. It is faster, cheaper, and sufficiently accurate for lookup tasks.

Choose RLMs when the task involves synthesis or comprehensive analysis. Use cases like "Summarize the evolution of this character throughout the novel," "Find all loop conditions in this repository that might cause a deadlock," or "Compare the liability clauses across these 50 contracts" are where RAG fails and RLMs shine. The extra cost is justified by the depth of reasoning required.

Toward hybrid agents with hot-swappable memory

The most powerful systems of the future will not be purists. We are moving toward hybrid agents with hot-swappable memory architectures. Imagine an agent that uses RAG to quickly narrow down a dataset of 10,000 documents to the most relevant 20, and then switches to an RLM architecture to recursively deep-read those 20 documents for the final answer.

This "funnel" approach optimizes the cost-latency trade-off. By combining the speed of semantic search with the rigorous inspection of recursive models, we can build AI assistants that are both responsive and deeply intelligent, capable of navigating the growing ocean of enterprise data without getting lost.

Conclusion

While Retrieval-Augmented Generation (RAG) has served as the backbone of modern AI applications, the emergence of Recursive Language Models (RLMs) signals a critical shift from passive retrieval to active reasoning. By treating context as an environment to be explored rather than a buffer to be filled, RLMs solve the "needle in the haystack" problem that plagues even the most sophisticated vector databases.

However, this power comes with a cost. The latency and compute requirements of RLMs mean they aren't a drop-in replacement for every RAG pipeline—yet. As you architect your next generation of LLM applications, the winning strategy will likely involve hybridizing these approaches: using RAG for broad, low-latency information gathering and RLMs for deep, multi-step reasoning tasks. The era of static context is ending; the era of active, programmatic intelligence is just beginning.

References:

Stay in the loop

Get notified when I publish new articles and projects.