The End of the Visual Web: Why AI Agents Need Contracts, Not Pixels

The Web Model Context Protocol (WebMCP) is a proposed browser standard that enables websites to expose structured 'tool contracts' to AI agents. Instead of forcing agents to visually scrape screens to identify buttons and fields, WebMCP provides a logical API layer. This allows agents to interact with websites through defined functions and JSON schemas, resulting in faster, cheaper, and more reliable autonomous actions compared to traditional fragile screen-scraping methods.

The "Visual Simulation" Trap: Why Screen Scraping Is a Dead End

The inefficiency of treating AI agents like faster humans

For the past year, the technology industry has engaged in a collective delusion: treating AI agents as if they were simply faster, tireless humans. To interact with the web, today's agents must "frantically" capture high-resolution screenshots, utilize expensive multimodal models to identify button coordinates, and fumble with simulated mouse clicks. This approach is pathetically primitive. It forces the most sophisticated logic engines we have ever built to interact with the internet through its clunkiest interface—the graphical pixel layer.

We are currently witnessing the "USB-C moment" for the agentic web. Just as universal hardware standards replaced a tangle of proprietary cables, the industry is moving away from visual simulation toward structured interaction. The reliance on computer vision to navigate the web is not a feature; it is a temporary bridge we built because the browser lacked a native language for machines. Relying on visual processing for logical tasks is wasteful: the site converts structured data into pixels, and the agent then burns computation cycles turning those pixels back into data.

The hidden cost of fragile DOM interactions

Beyond inefficiency, the "visual simulation" model introduces a lethal fragility to enterprise workflows. When an agent relies on screen scraping or raw DOM actuation, a single CSS update or a shifted "Submit" button can paralyze a mission-critical workflow. This fragility creates a maintenance nightmare in which engineering teams must constantly patch agent instructions to match minor UI tweaks.

The costs are twofold: the direct compute cost of processing millions of visual tokens and the indirect operational cost of debugging broken agent scripts. In a production environment, reliability is the only metric that matters. If an agent fails to book a flight because a promotional banner shifted the checkout button by fifty pixels, the technology is effectively useless for autonomous commerce. The shift we are seeing now—driven by the Web Model Context Protocol—is about moving from probabilistic guessing to deterministic execution. We are replacing the fragility of sight with the certainty of a contract.

Enter the Web Model Context Protocol: Building the Logical Web

Replacing pixels with "Tool Contracts" and structured data

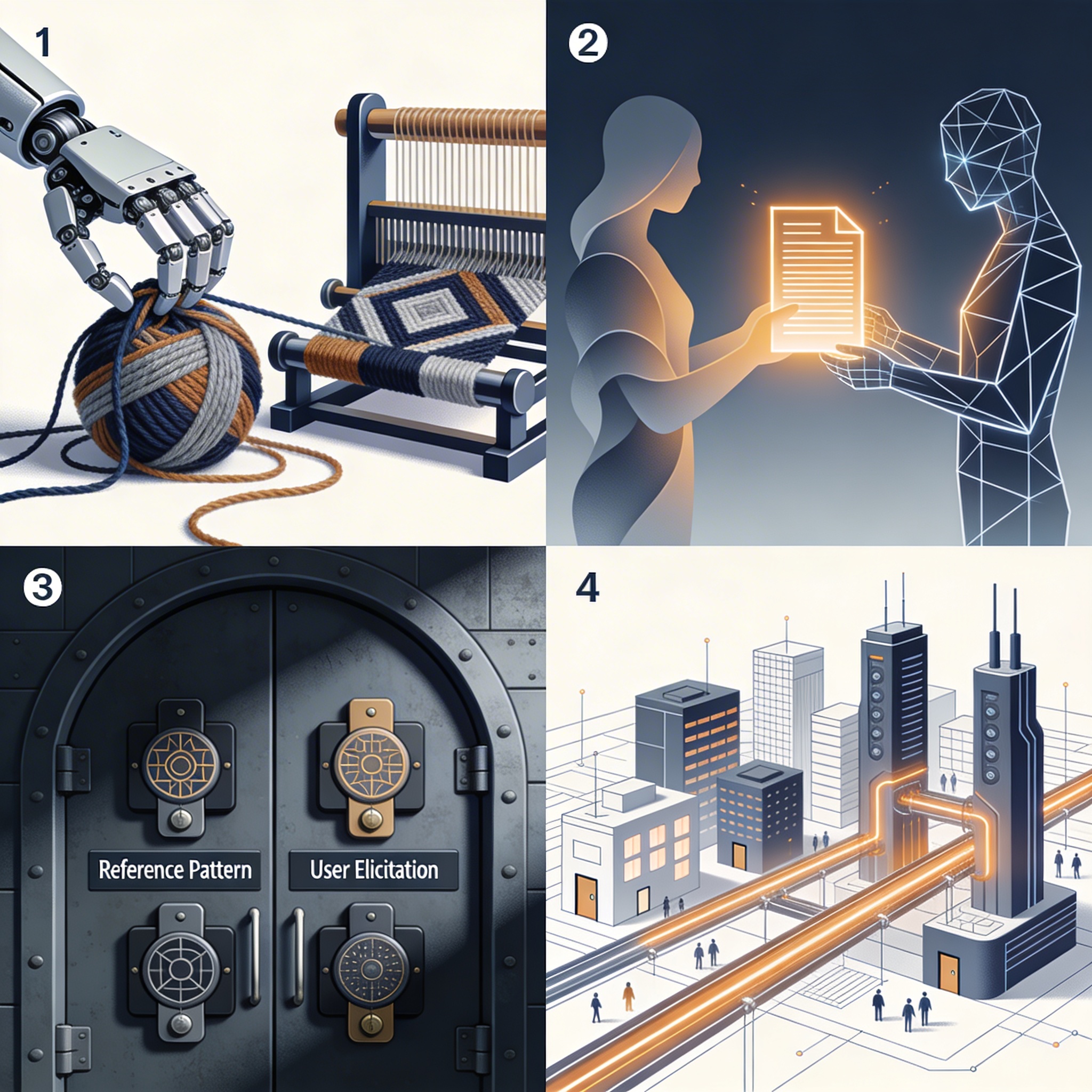

The Web Model Context Protocol (WebMCP) represents a fundamental architectural shift: the bifurcation of the web into a visual layer for humans and a logical layer for agents. Rather than forcing an agent to "see" a page, WebMCP allows a website to expose its capabilities through a Tool Contract—a structured package of natural language descriptions and JSON schemas.

This "API in the UI" approach changes the fundamental relationship between the browser and the model. By utilizing the `navigator.modelContext` registry, a website can expose existing JavaScript functions directly to the agent. When an agent needs to book a flight, it doesn't look for a blue button; it invokes `book_flight()` with precise parameters. Critical to this architecture is that the browser does not expose raw code, which would be a security nightmare. Instead, it brokers a negotiated contract, ensuring the agent understands *what* can be done without needing to understand *how* the pixels are rendered.
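To make the idea of a Tool Contract concrete, here is a minimal sketch. It does not call the real browser API: `ToolRegistry` is a hypothetical in-memory stand-in for the `navigator.modelContext` registry, and the `ToolContract` shape, the `describe` and `invoke` methods, and the `book_flight` parameters are all assumptions made for illustration.

```typescript
// Hypothetical shapes for illustration; not copied from the WebMCP specification.
type ParamSpec = { type: "string" | "number"; description: string };

type JsonSchema = {
  type: "object";
  properties: Record<string, ParamSpec | undefined>;
  required: string[];
};

interface ToolContract {
  name: string;
  description: string; // natural-language description the agent reads
  inputSchema: JsonSchema; // JSON schema for the parameters
  execute: (args: Record<string, unknown>) => unknown; // existing page logic
}

// In-memory stand-in for the registry the article calls navigator.modelContext.
class ToolRegistry {
  private tools = new Map<string, ToolContract>();

  register(tool: ToolContract): void {
    this.tools.set(tool.name, tool);
  }

  // What the agent sees: names, descriptions, schemas. Never the function body.
  describe(): Array<Omit<ToolContract, "execute">> {
    return Array.from(this.tools.values()).map((tool) => ({
      name: tool.name,
      description: tool.description,
      inputSchema: tool.inputSchema,
    }));
  }

  // Check the arguments against the contract before running any page code.
  invoke(name: string, args: Record<string, unknown>): unknown {
    const tool = this.tools.get(name);
    if (!tool) throw new Error(`Unknown tool: ${name}`);
    for (const key of tool.inputSchema.required) {
      if (!(key in args)) throw new Error(`Missing required parameter: ${key}`);
    }
    for (const key of Object.keys(args)) {
      const spec = tool.inputSchema.properties[key];
      if (!spec) throw new Error(`Unexpected parameter: ${key}`);
      if (typeof args[key] !== spec.type) {
        throw new Error(`Parameter ${key} must be a ${spec.type}`);
      }
    }
    return tool.execute(args);
  }
}

const registry = new ToolRegistry();

registry.register({
  name: "book_flight",
  description: "Book a one-way flight for the signed-in user.",
  inputSchema: {
    type: "object",
    properties: {
      destination: { type: "string", description: "Airport code, e.g. LHR" },
      passengers: { type: "number", description: "Number of travellers" },
    },
    required: ["destination", "passengers"],
  },
  // Stand-in for the site's real booking function.
  execute: (args) => ({
    status: "booked",
    destination: args.destination,
    passengers: args.passengers,
  }),
});

// The agent calls the tool by name with structured arguments; no pixels involved.
const booking = registry.invoke("book_flight", { destination: "LHR", passengers: 2 });
```

The design point is the split between `describe` and `invoke`: the agent receives only the description and schema, while the function body stays inside the page and runs only after the arguments pass the contract check.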

How the Declarative and Imperative APIs bypass the GUI

WebMCP operationalizes this logic through two distinct pathways: the Declarative API and the Imperative API. The Declarative API is the workhorse for standard interactions, allowing developers to add simple HTML attributes to existing forms—turning a standard login field into a machine-readable entry point without writing custom JavaScript. It effectively creates a "headless" mode for the frontend that exists alongside the visual interface.

For more complex, dynamic workflows, the Imperative API allows developers to expose rich JavaScript functions as tools. This sidesteps the latency associated with the "Lost in the Middle" phenomenon, where Large Language Models (LLMs) lose track of context while parsing massive strings of HTML. By bypassing the Graphical User Interface (GUI) entirely, the Imperative API allows agents to execute complex sequences, such as configuring a multi-step purchase, in a single, token-efficient round trip. The agent no longer simulates a user; it acts as a client-side application integration.
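As a rough illustration of the single round trip, the sketch below wraps a multi-step purchase in one function that a site could expose as a tool. The function name `configurePurchase`, the catalogue, the prices, and the step names are all made up for the example; a real site would call its existing frontend logic instead.

```typescript
// Hypothetical cart logic standing in for a site's existing frontend code.
interface PurchaseArgs {
  sku: string;
  quantity: number;
  shipping: "standard" | "express";
}

interface PurchaseSummary {
  sku: string;
  quantity: number;
  shipping: string;
  totalCents: number;
  steps: string[]; // the UI steps this one call replaced
}

const UNIT_PRICE_CENTS: Record<string, number | undefined> = { "desk-lamp": 2500 }; // made-up catalogue
const SHIPPING_CENTS: Record<PurchaseArgs["shipping"], number> = { standard: 500, express: 1500 }; // made-up rates

// One tool invocation runs every step a visual agent would have to click through.
function configurePurchase(args: PurchaseArgs): PurchaseSummary {
  const unitPrice = UNIT_PRICE_CENTS[args.sku];
  if (unitPrice === undefined) throw new Error(`Unknown SKU: ${args.sku}`);
  if (!Number.isInteger(args.quantity) || args.quantity < 1) {
    throw new Error("quantity must be a positive integer");
  }
  const steps = ["select_item", "set_quantity", "choose_shipping", "compute_total"];
  const totalCents = unitPrice * args.quantity + SHIPPING_CENTS[args.shipping];
  return { sku: args.sku, quantity: args.quantity, shipping: args.shipping, totalCents, steps };
}

// Two lamps at 2500 cents each plus 1500 cents express shipping.
const summary = configurePurchase({ sku: "desk-lamp", quantity: 2, shipping: "express" });
```

A screen-driven agent would need at least one screenshot and one action for each of the four steps; here the agent sends one structured request and reads one structured result.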

The Security Paradox: Protecting Users in an Agentic World

Neutralizing the "Lethal Trifecta" of open tabs and external comms

The rise of browser-based agents has introduced a terrifying security scenario known as the "Lethal Trifecta." This condition emerges when three factors align: the agent has access to private data (like an open banking tab), it is exposed to untrusted content (a malicious second tab), and it possesses a channel for external communication. In this environment, a malicious website could theoretically prompt the agent to exfiltrate sensitive routing numbers from the trusted tab without the user's consent.

WebMCP addresses this by strictly enforcing a SecureContext (HTTPS) for all agent interactions, ensuring that the agentic web is not built on insecure legacy infrastructure. However, transport security is not enough. The protocol assumes the agent itself may be compromised or "jailbroken" by prompt injection. Therefore, the security model shifts from trying to sanitize the agent's thoughts to strictly controlling the agent's hands. The browser acts as a sandbox, preventing the agent from executing arbitrary actions across origins.
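The two ideas in this section can be sketched as simple predicates. This is an illustrative model only, not how a browser implements the checks: the `AgentSession` and `ToolCall` shapes and both function names are hypothetical, and the secure-context test is simplified to an `https://` prefix check.

```typescript
// Hypothetical session flags; the field names are illustrative, not part of any spec.
interface AgentSession {
  hasPrivateDataAccess: boolean; // e.g. an open banking tab
  exposedToUntrustedContent: boolean; // e.g. a malicious second tab
  canCommunicateExternally: boolean; // any channel that can send data out
}

// The trifecta is only lethal when all three conditions hold at once.
function isLethalTrifecta(session: AgentSession): boolean {
  return (
    session.hasPrivateDataAccess &&
    session.exposedToUntrustedContent &&
    session.canCommunicateExternally
  );
}

interface ToolCall {
  toolOrigin: string; // origin that registered the tool, e.g. "https://bank.example"
  callerOrigin: string; // origin of the page the agent is acting in
}

// Control the agent's "hands": allow a call only over HTTPS and within one origin.
// Simplification: real secure-context rules are broader than an https:// prefix.
function isCallAllowed(call: ToolCall): boolean {
  const isSecure = call.toolOrigin.indexOf("https://") === 0;
  return isSecure && call.toolOrigin === call.callerOrigin;
}

const risky = isLethalTrifecta({
  hasPrivateDataAccess: true,
  exposedToUntrustedContent: true,
  canCommunicateExternally: true,
});

const crossOriginAttempt = isCallAllowed({
  toolOrigin: "https://bank.example",
  callerOrigin: "https://evil.example",
});
```

Note that neither check inspects what the model is "thinking". Both operate on what the agent is allowed to do, which is the shift this section describes.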

The Reference Pattern: Why agents should hold keys, not data

To solve the data exfiltration problem, WebMCP introduces the Reference Pattern. This architectural pattern ensures that agents never actually "see" the most sensitive user secrets. Instead of passing a raw account balance or social security number into the model's context window, the website stores the data in origin-specific secure storage and returns a JSON object with a `type: "reference"` property and an opaque ID.

The agent can use this ID to perform subsequent tasks—like transferring funds—but it remains blind to the underlying value. Furthermore, for high-stakes operations, the protocol favors User Elicitation. This forces the browser to trigger a native modal that the agent literally cannot see or manipulate, ensuring a human stays in the driver's seat for critical confirmation steps. This "human-in-the-loop" mechanism is not just a feature; it is a prerequisite for enterprise adoption.
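A minimal sketch of the Reference Pattern, assuming an in-memory map as a stand-in for origin-specific secure storage. Only the `type: "reference"` property and the opaque ID come from the description above; `ReferenceVault`, `getRoutingNumber`, `transferFunds`, and the sample routing number are hypothetical.

```typescript
// Shape described in the article: a reference marker plus an opaque ID.
interface SecretReference {
  type: "reference";
  id: string;
}

// Stand-in for origin-specific secure storage; the model never reads this map.
class ReferenceVault {
  private secrets = new Map<string, string>();
  private counter = 0;

  // Store the raw value and hand back only an opaque handle.
  store(value: string): SecretReference {
    // A real implementation would use an unguessable random ID.
    // A counter keeps this sketch free of dependencies.
    this.counter += 1;
    const id = `ref_${this.counter}`;
    this.secrets.set(id, value);
    return { type: "reference", id };
  }

  // Only site code calls this, when it runs a tool on the agent's behalf.
  resolve(ref: SecretReference): string {
    const value = this.secrets.get(ref.id);
    if (value === undefined) throw new Error(`Unknown reference: ${ref.id}`);
    return value;
  }
}

const vault = new ReferenceVault();

// Tool 1: returns a reference instead of the routing number itself.
function getRoutingNumber(): SecretReference {
  return vault.store("123456789"); // made-up value for the example
}

// Tool 2: accepts the reference; the raw value is resolved inside site code.
function transferFunds(
  source: SecretReference,
  amountCents: number
): { status: string; amountCents: number } {
  if (amountCents <= 0) throw new Error("amount must be positive");
  const routing = vault.resolve(source);
  if (routing.length === 0) throw new Error("empty routing number");
  return { status: "submitted", amountCents };
}

const ref = getRoutingNumber();
// This string is everything that enters the model's context window.
const agentContext = JSON.stringify(ref);
const transfer = transferFunds(ref, 5000);
```

Even if a malicious tab talks the agent into leaking everything in its context, all it can leak is the handle, which is meaningless outside the origin that issued it.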

The Economic Case: "Zero-Infrastructure" Integration

Leveraging existing user sessions instead of building new APIs

The most compelling argument for WebMCP is economic, not technical. Historically, building an API for third-party automation required significant backend investment, authentication management, and ongoing maintenance. WebMCP flips this model by enabling a "Zero-Infrastructure" experience. By leveraging the user's existing authenticated browser session and frontend logic, website owners do not need to build separate, expensive API infrastructure for every agentic interaction.

This fulfills the vision originally championed by project pioneer Jason McGhee: "Websites should offer native LLM experiences without API keys or having to foot the bill." The website's existing frontend logic *is* the infrastructure. When an agent acts on a user's behalf, it is simply driving the car that is already parked in the user's driveway. This allows for a collaborative revolution where users and agents work in the same tab simultaneously—handling "uncommitted changes" where an agent drafts a form and the user retains final approval.

Reducing token consumption by eliminating visual processing

From a unit economics perspective, the shift from visual processing to structured contracts is a massive cost saver. Visual agents consume thousands of tokens per step to process high-resolution screenshots, analyze layout, and infer intent. This makes simple tasks prohibitively expensive at scale. By contrast, a Tool Contract requires a fraction of the token count, passing only concise JSON schemas and text descriptions.

The `SubmitEvent.agentInvoked` property lets a site distinguish between human and machine submissions, so it can respond with clean JSON for the agent while redirecting the human to a visual confirmation page. This efficiency allows companies to deploy agentic features without the massive inference bills associated with multimodal vision models. It transforms the agent from a high-cost luxury into a viable everyday interface.
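The branching on `agentInvoked` might look like the sketch below. To keep it runnable outside a browser, it uses a hypothetical `AgentAwareSubmit` object rather than a real `SubmitEvent`; the `SubmitOutcome` type, the `handleSubmit` function, the order ID, and the confirmation path are all assumptions for illustration.

```typescript
// Minimal stand-in for the event; only the agentInvoked flag comes from the article.
interface AgentAwareSubmit {
  agentInvoked: boolean;
}

type SubmitOutcome =
  | { kind: "json"; body: string }
  | { kind: "redirect"; location: string };

function handleSubmit(event: AgentAwareSubmit): SubmitOutcome {
  // Hypothetical ID; a real site would get this from its own order logic.
  const orderId = "order-001";

  if (event.agentInvoked) {
    // Agents get a compact structured result with nothing to render or parse visually.
    return { kind: "json", body: JSON.stringify({ status: "confirmed", orderId }) };
  }

  // Humans get the usual visual confirmation page.
  return { kind: "redirect", location: `/confirmation?order=${orderId}` };
}

const agentResult = handleSubmit({ agentInvoked: true });
const humanResult = handleSubmit({ agentInvoked: false });
```

The same submission path serves both audiences; only the shape of the response differs.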

The New SEO: Optimizing for the Web Model Context Protocol

From human readability to machine callability

We are entering an era where Technical SEO is evolving from making text readable for crawlers to making functions callable for agents. The clarity of your Tool Contract now determines your business's discoverability in an agent-first world. Through the Declarative API, developers use attributes like `tool-prop-description` to ensure agents populate parameters correctly, rather than guessing based on ambiguous field labels.

This goes beyond mere tagging; it is about defining the rules of engagement. Attributes like `tool-autosubmit` act as a toggle for agent autonomy, determining whether an agent can skip the manual click step. Developers can also utilize new CSS pseudo-classes like `:tool-form-active` to provide visual transparency to the user, highlighting exactly which fields the agent is filling in real time. This visual feedback builds trust, bridging the gap between the invisible logic of the agent and the skeptical eye of the user.
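To show how annotated form fields could turn into a machine-callable contract, here is a sketch that models a form as a plain object and derives a tool schema from it. The attribute names `tool-prop-description` and `tool-autosubmit` are the ones quoted above; the `AnnotatedForm` model, the `toolName` field, the `deriveTool` function, and the fallback rule are hypothetical, and a browser would do this work on real DOM elements.

```typescript
// Plain-object model of a form. Only the two attribute names come from the article.
interface FormField {
  name: string;
  inputType: "text" | "number";
  required: boolean;
  attributes: Record<string, string | undefined>;
}

interface AnnotatedForm {
  toolName: string; // hypothetical: how a form names its tool is not covered here
  attributes: Record<string, string | undefined>;
  fields: FormField[];
}

type DerivedProperty = { type: "string" | "number"; description: string };

interface DerivedTool {
  name: string;
  autosubmit: boolean;
  inputSchema: {
    type: "object";
    properties: Record<string, DerivedProperty | undefined>;
    required: string[];
  };
}

function deriveTool(form: AnnotatedForm): DerivedTool {
  const properties: Record<string, DerivedProperty | undefined> = {};
  const required: string[] = [];

  for (const field of form.fields) {
    properties[field.name] = {
      type: field.inputType === "number" ? "number" : "string",
      // Fall back to the bare field name when no description is provided,
      // which is exactly the ambiguity the attribute is meant to remove.
      description: field.attributes["tool-prop-description"] ?? field.name,
    };
    if (field.required) required.push(field.name);
  }

  return {
    name: form.toolName,
    // Treated as a boolean attribute: present means the agent may submit.
    autosubmit: "tool-autosubmit" in form.attributes,
    inputSchema: { type: "object", properties, required },
  };
}

const flightTool = deriveTool({
  toolName: "search_flights",
  attributes: { "tool-autosubmit": "" },
  fields: [
    {
      name: "destination",
      inputType: "text",
      required: true,
      attributes: { "tool-prop-description": "Code of the arrival airport" },
    },
    { name: "passengers", inputType: "number", required: false, attributes: {} },
  ],
});
```

In this example the `passengers` field has no description, so the agent is left with only the field name to go on. That gap is the "ambiguous field label" problem in miniature.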

The first-mover advantage for agent-ready businesses

The strategic implication for product leaders is clear: if your site’s tool definitions are ambiguous, agents will simply choose a competitor whose contract is easier to fulfill. In a future where an AI assistant is asked to "book a flight to London," the agent will prioritize the airline that exposes a clean, deterministic `book_flight` tool over one that requires complex visual scraping.

This creates a significant first-mover advantage. Companies that adopt WebMCP early are effectively "whitelisting" themselves for the next generation of automated commerce. Just as mobile-responsiveness became a non-negotiable standard for the smartphone era, "agent-readiness" is becoming the gatekeeper for the AI era. The winners will be those who recognize that their most valuable user experience is no longer the one designed for human eyes.

Conclusion

We are moving from a "Visual Web" of pixels to a "Logical Web" of contracts. The Web Model Context Protocol allows these two layers to coexist, serving both human eyes and machine logic from a single source of truth. As we stop building exclusively for human sight, we must rethink our entire front-end strategy. When the "perfect" web application no longer relies on a user finding the right button, but on an agent understanding the right contract, the clarity of your code becomes your most valuable asset. The question for every product leader today is simple: When your UI is invisible, does your business still exist?

Frequently Asked Questions

What is the Web Model Context Protocol?

The Web Model Context Protocol (WebMCP) is a proposed browser standard that enables websites to expose structured 'tool contracts' to AI agents. Instead of forcing agents to visually scrape screens to identify buttons and fields, WebMCP provides a logical API layer. This allows agents to interact with websites through defined functions and JSON schemas, resulting in faster, cheaper, and more reliable autonomous actions compared to traditional fragile screen-scraping methods.

How does WebMCP improve AI agent security?

WebMCP mitigates critical security risks like the 'Lethal Trifecta' (access to secrets, untrusted content, and external comms) by enforcing SecureContext (HTTPS) and utilizing a 'Reference Pattern.' This pattern allows agents to manipulate sensitive data—such as bank routing numbers—via opaque IDs without ever seeing the raw information. This prevents malicious tabs from tricking an agent into exfiltrating user secrets, ensuring the agent holds keys rather than the data itself.

What is the difference between Declarative and Imperative APIs in WebMCP?

The Declarative API is designed for standard web interactions, allowing developers to convert existing HTML forms into agent-ready tools using simple attributes like `tool-prop-description`. The Imperative API targets complex, dynamic workflows by exposing JavaScript functions directly to the agent via the `navigator.modelContext` registry. While the Declarative API handles form-filling, the Imperative API enables agents to execute sophisticated logic and multi-step processes without relying on the visual interface.
